Beyond Porting: How vLLM Orchestrates High-Performance Inference on AMD ROCm

For a long time, enabling AMD support meant "porting", i.e., just making code run. That era is over.

13 posts

DeepSeek-V3.2 (NVFP4 + TP2) has run successfully and smoothly on GB300 (SM103 - Blackwell Ultra). Leveraging FP4 quantization, it achieves a single-GPU throughput of 7360 TGS (tokens / GPU /...

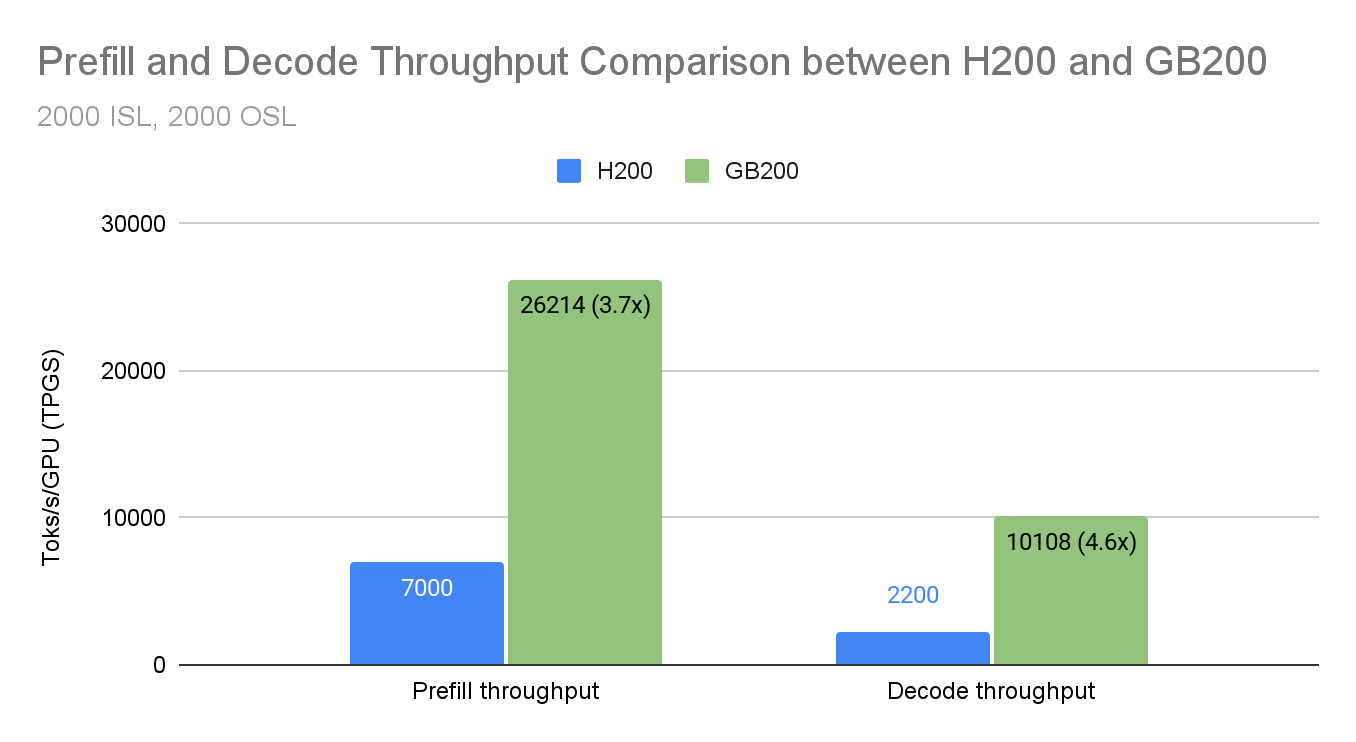

Building on our previous work achieving 2.2k tok/s/H200 decode throughput with wide-EP, the vLLM team has continued performance optimization efforts targeting NVIDIA's GB200 platform. This blog...

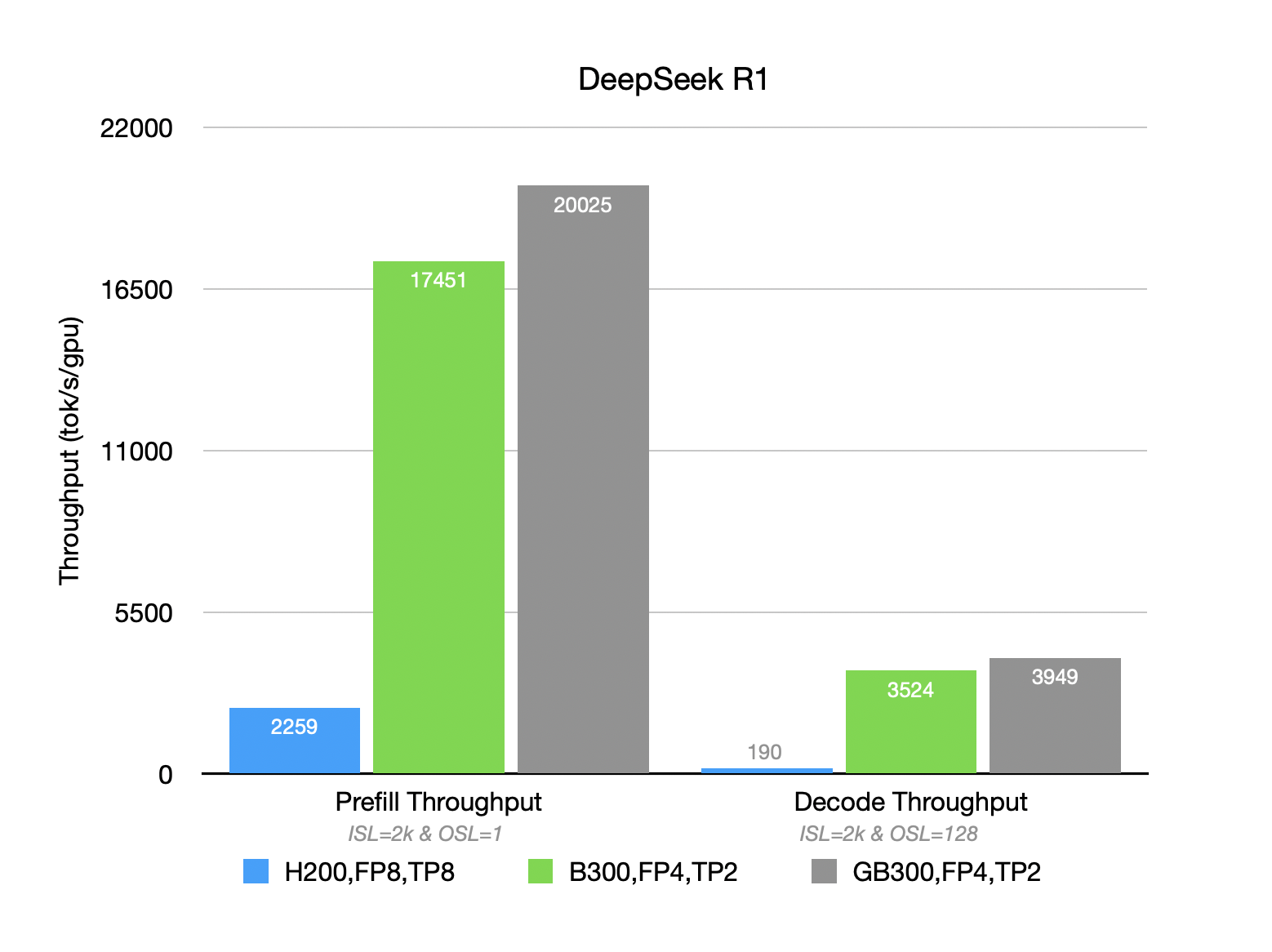

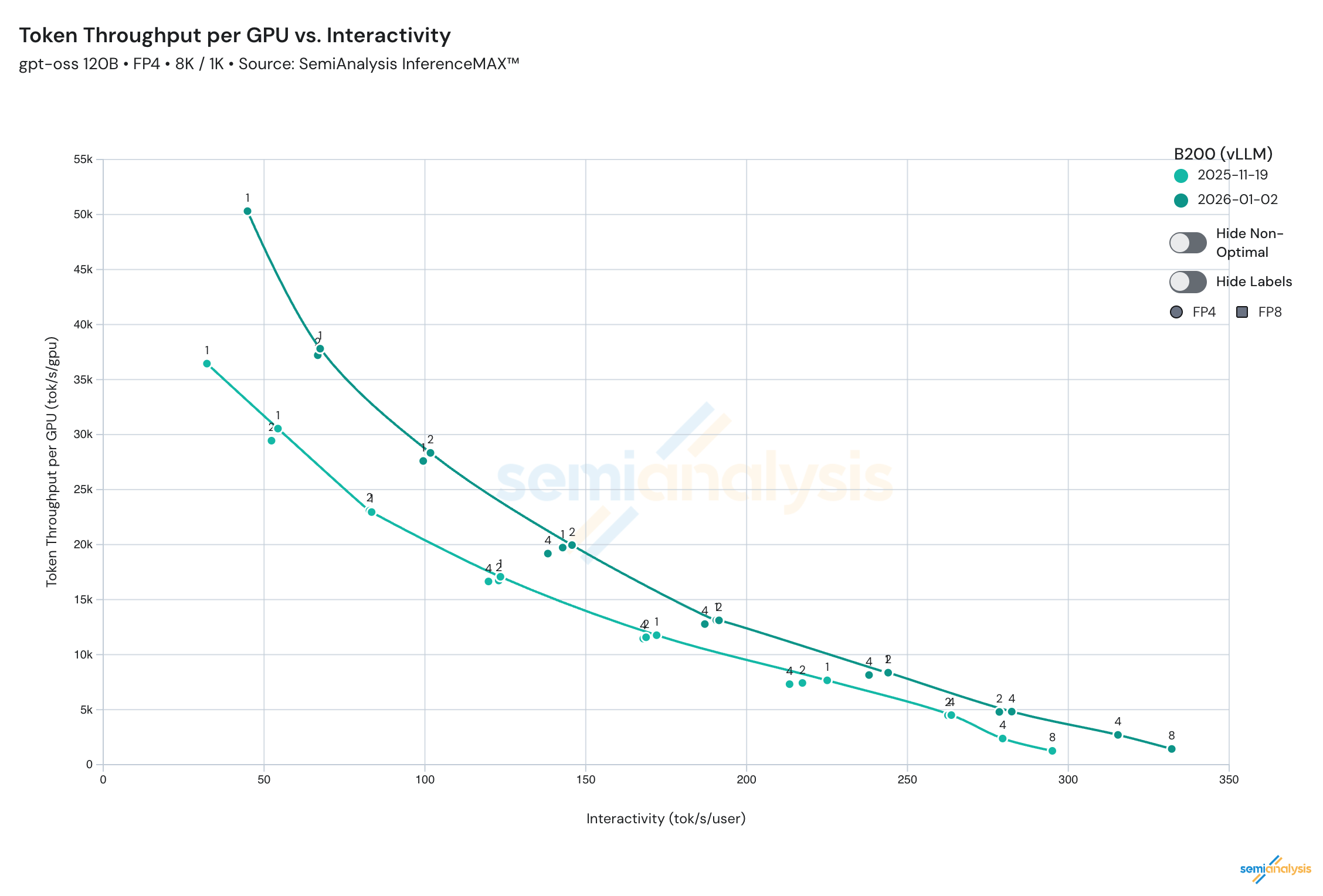

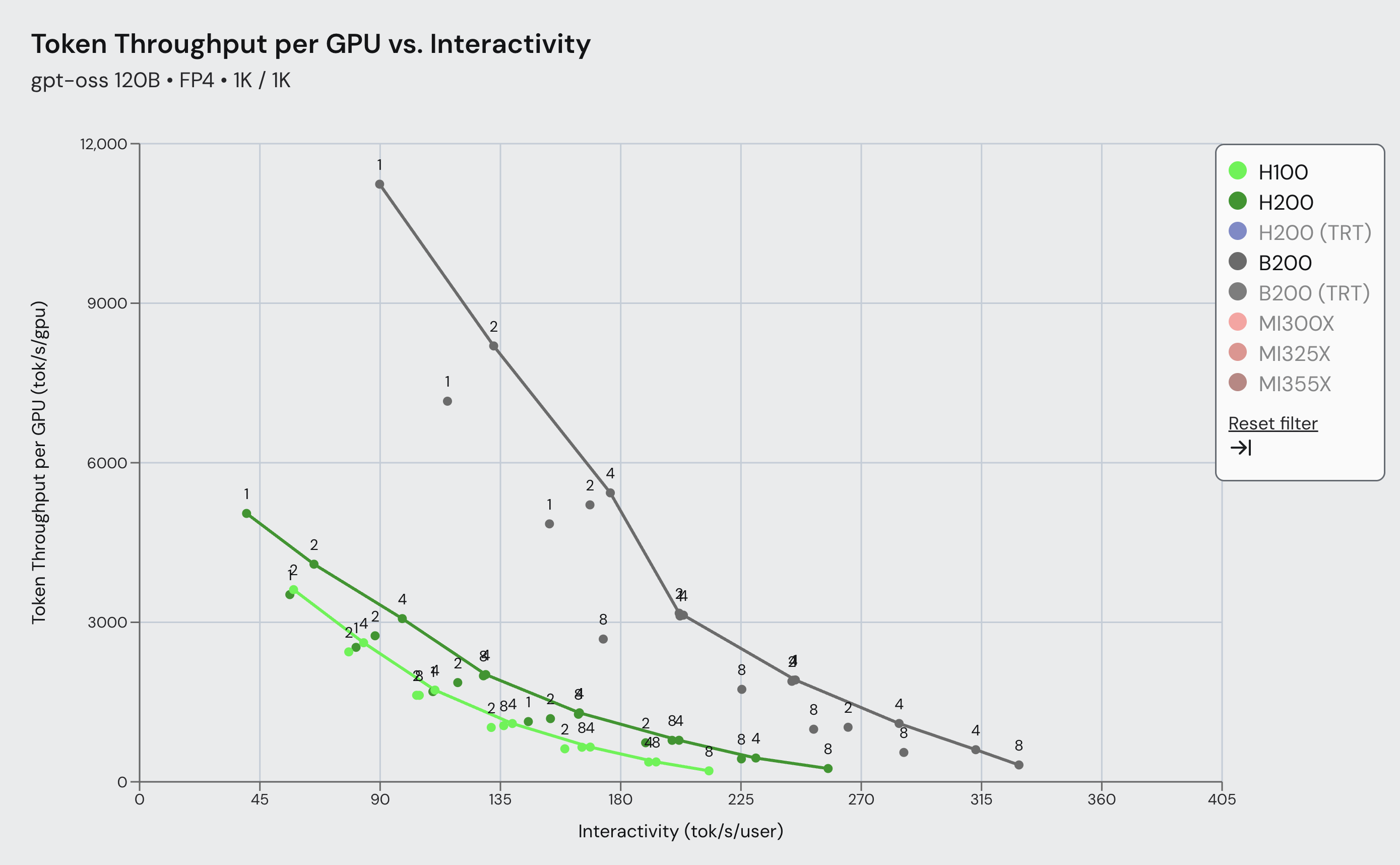

TL;DR: In collaboration with the open-source community, vLLM + NVIDIA has achieved significant performance milestones on the gpt-oss-120b model running on NVIDIA's Blackwell GPUs. Through deep...

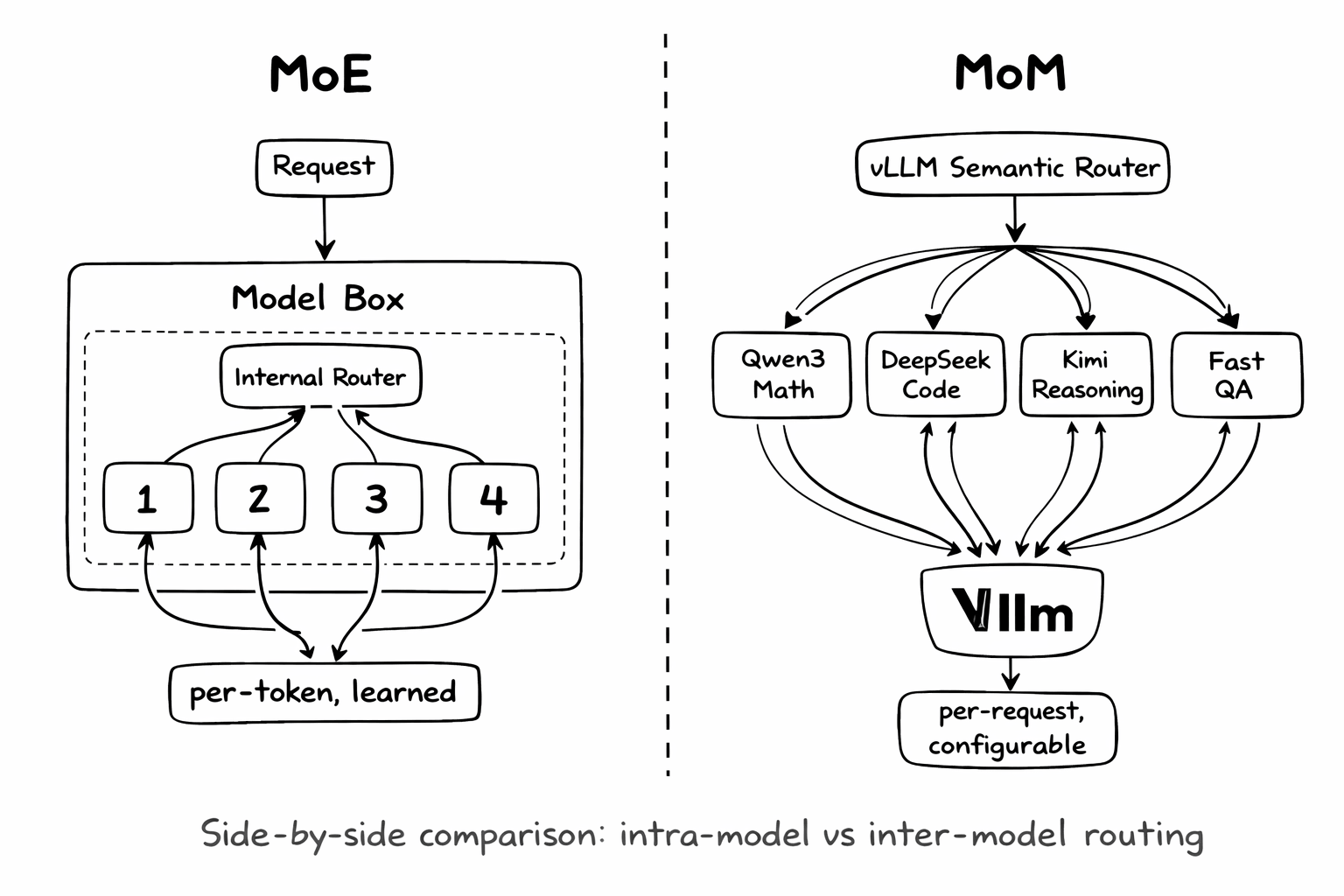

We are working on building the System Level Intelligence for Mixture-of-Models (MoM), bringing Collective Intelligence into LLM systems.

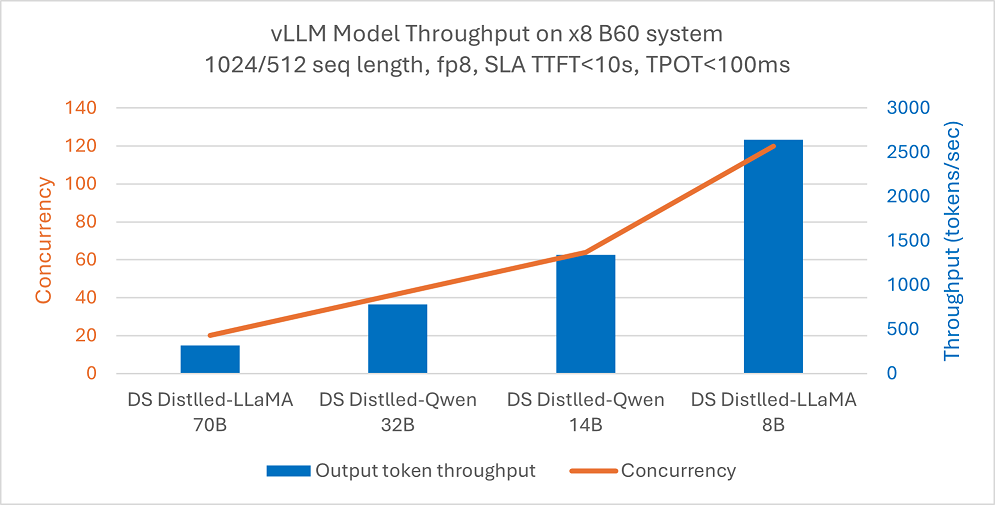

Over the past several months, AMD and the vLLM SR Team have been collaborating to bring vLLM Semantic Router (VSR) to AMD GPUs—not just as a performance optimization, but as a fundamental shift in...

Achieve faster, more efficient LLM serving without sacrificing accuracy!

Intel® Arc™ Pro B-Series GPUs deliver powerful AI capabilities with a focus on accessibility and exceptional price-to-performance ratios. Their large memory capacity and scalability...

vLLM TPU is now powered by tpu-inference, an expressive and powerful new hardware plugin unifying JAX and PyTorch under a single lowering path. It is not only faster than the previous generation...

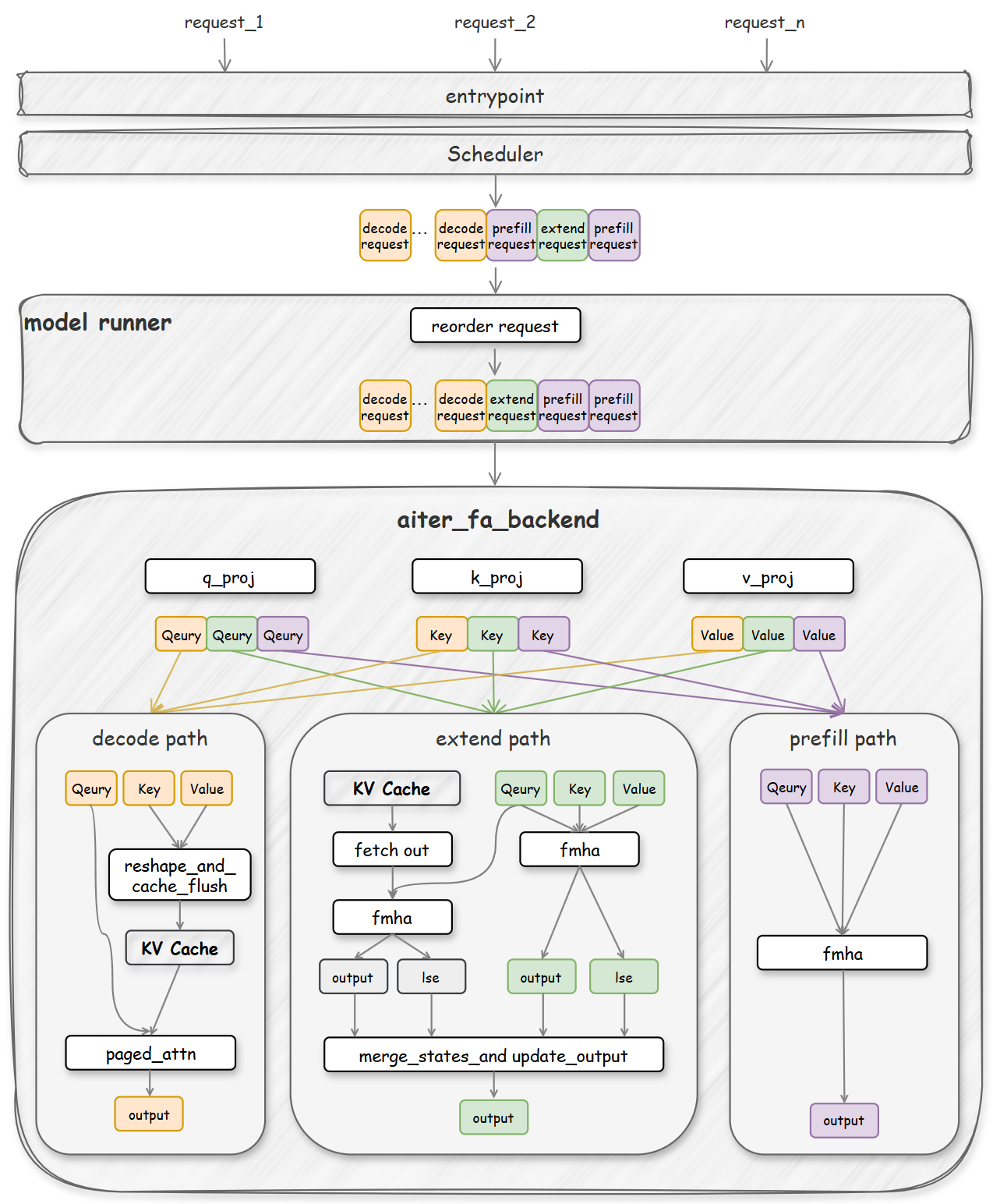

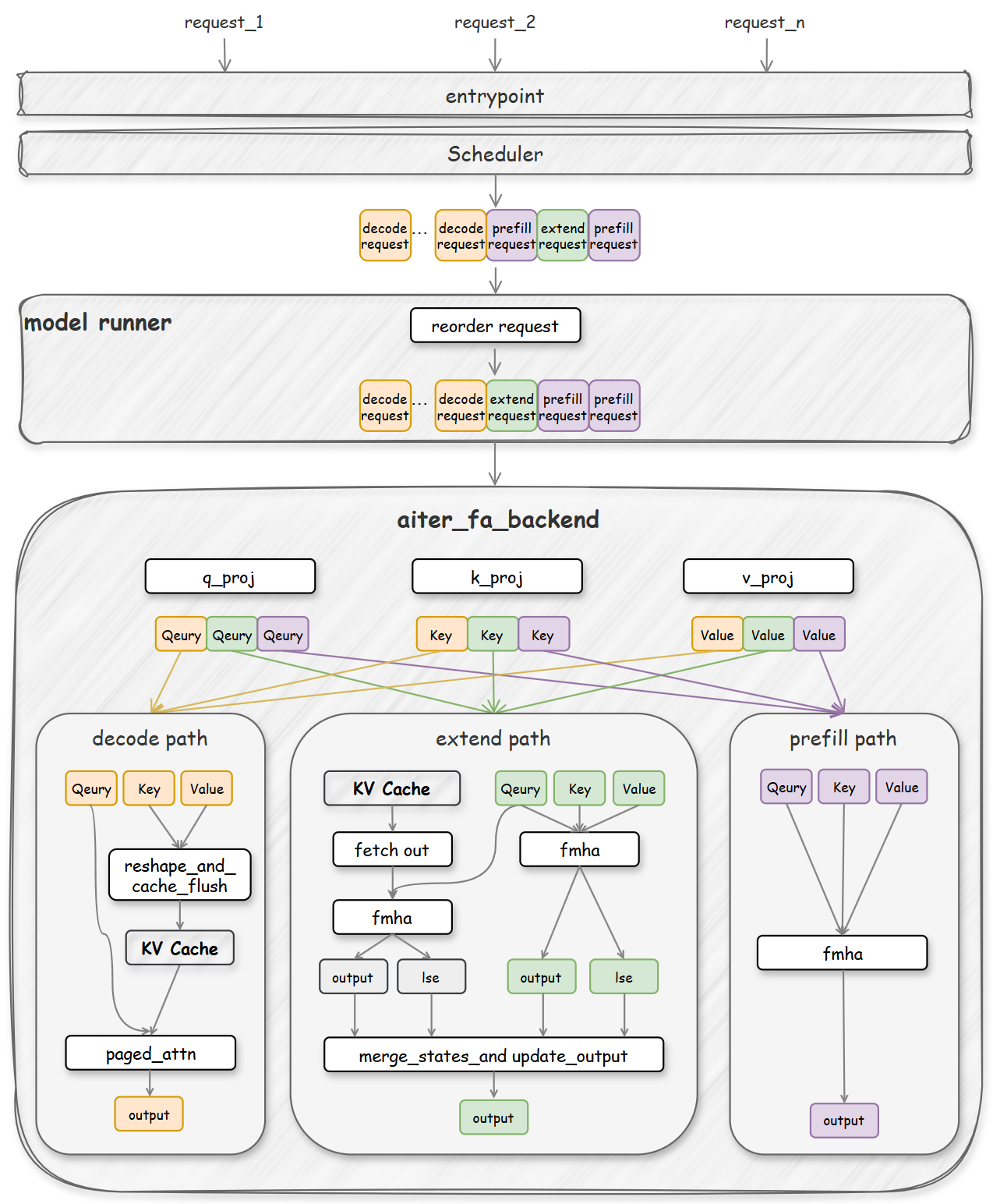

Over the past several months, we’ve been collaborating closely with NVIDIA to unlock the full potential of their latest NVIDIA Blackwell GPU architecture (B200/GB200) for large language model...

Since December 2024, through the joint efforts of the vLLM community and the Ascend team on vLLM, we have completed the Hardware Pluggable RFC. This proposal allows hardware integration into vLLM...

TL;DR: vLLM on AMD ROCm now has better FP8 performance!

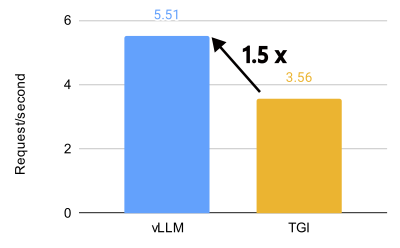

TL;DR: vLLM unlocks incredible performance on the AMD MI300X, achieving 1.5x higher throughput and 1.7x faster time-to-first-token (TTFT) than Text Generation Inference (TGI) for Llama 3.1 405B....