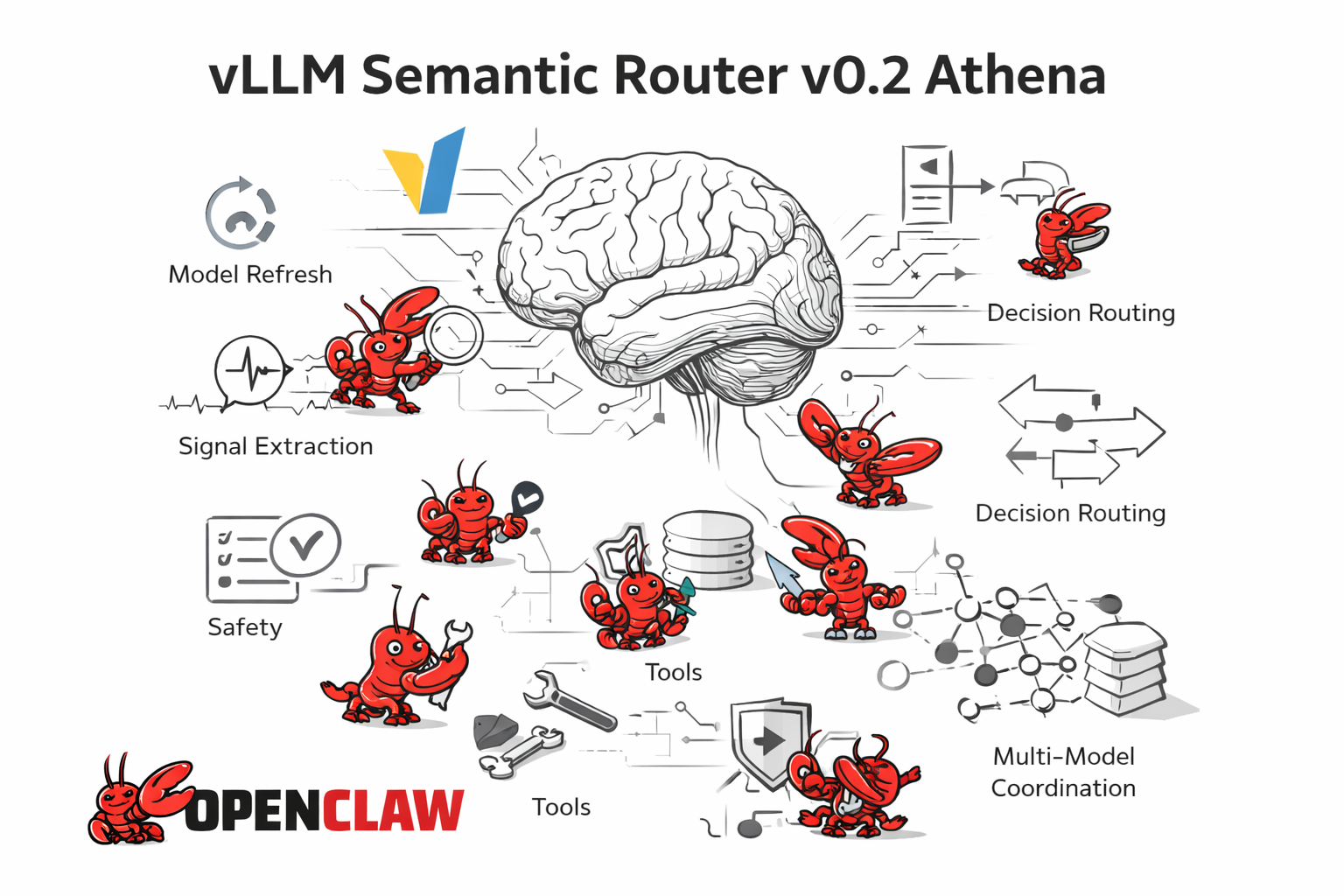

Inside vLLM: Anatomy of a High-Throughput LLM Inference System

In this post, I'll gradually introduce the core system components and advanced features that make up a modern high-throughput LLM inference system. In particular, I'll be doing a breakdown...