Run Highly Efficient Multimodal Agentic AI with NVIDIA Nemotron 3 Nano Omni Using vLLM

We are excited to support the newly released NVIDIA Nemotron 3 Nano Omni model on vLLM.

17 posts


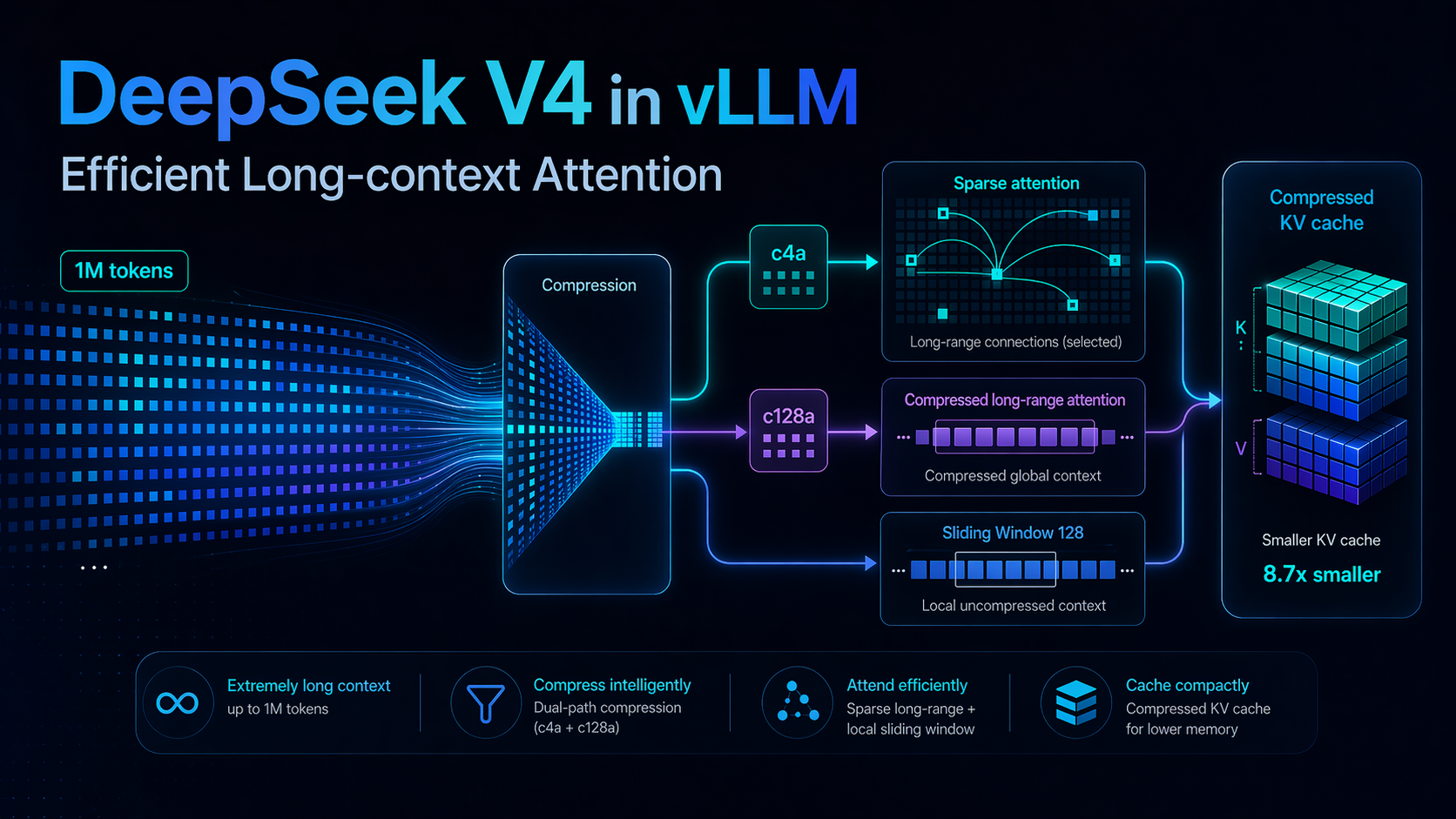

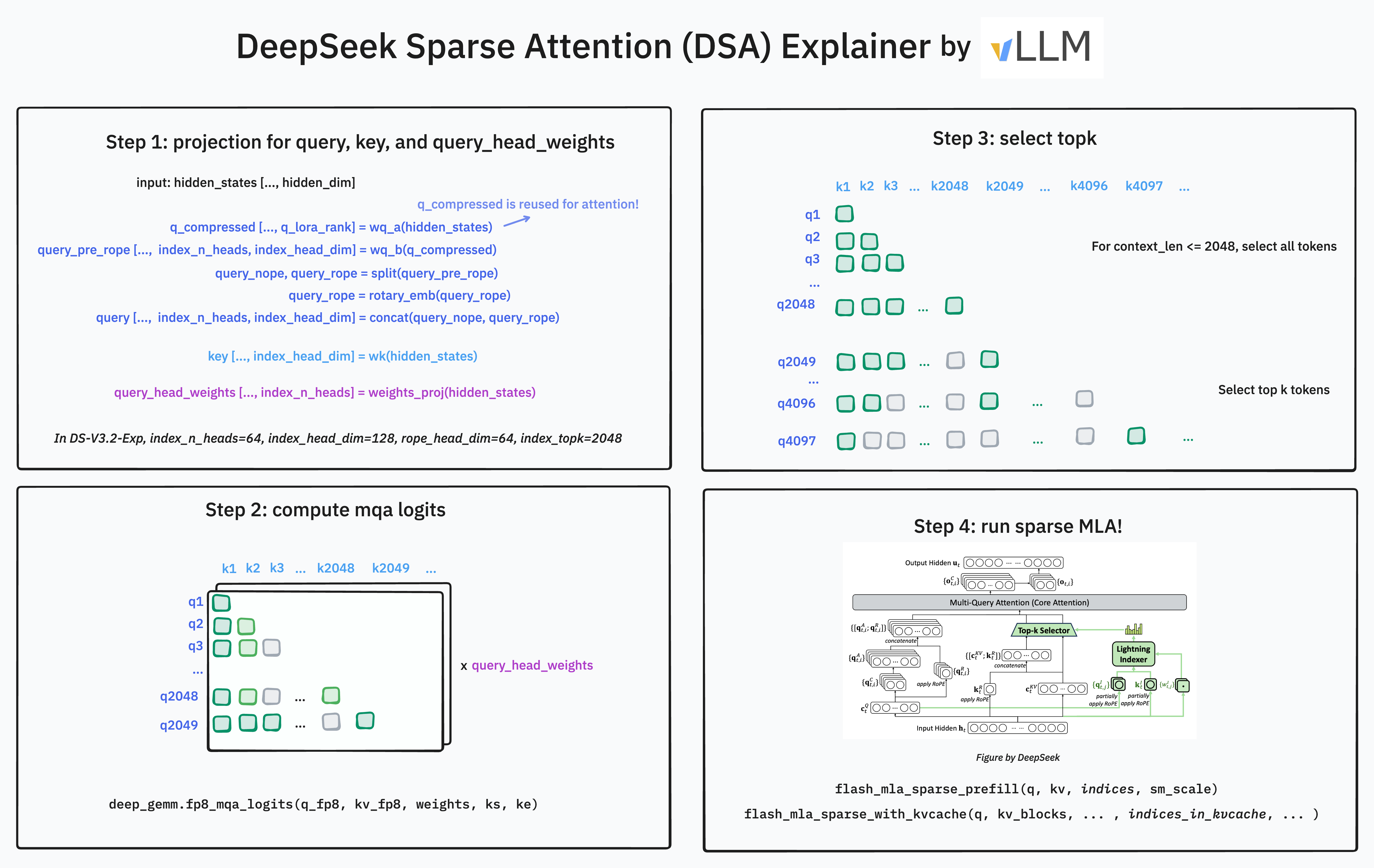

A first-principles walkthrough of DeepSeek V4's long-context attention, and how we implemented it in vLLM.

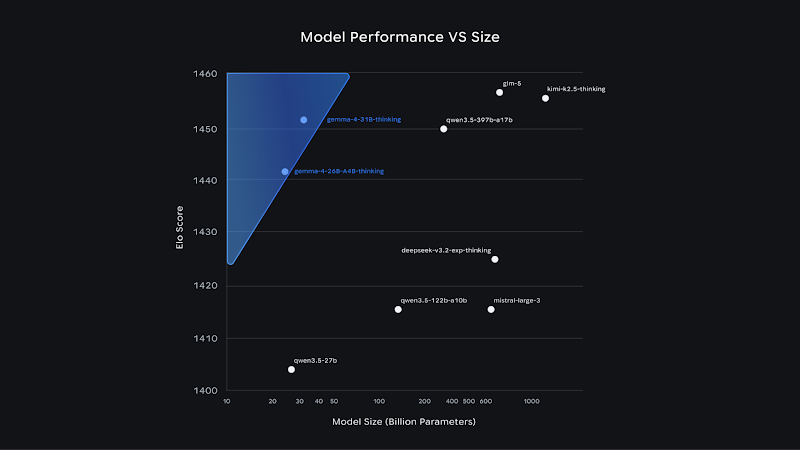

With the debut of Gemma 4, vLLM introduces immediate support for Google's most sophisticated open model lineup, spanning multiple hardware backends, with first-ever Day 0 support on Google TPUs,...

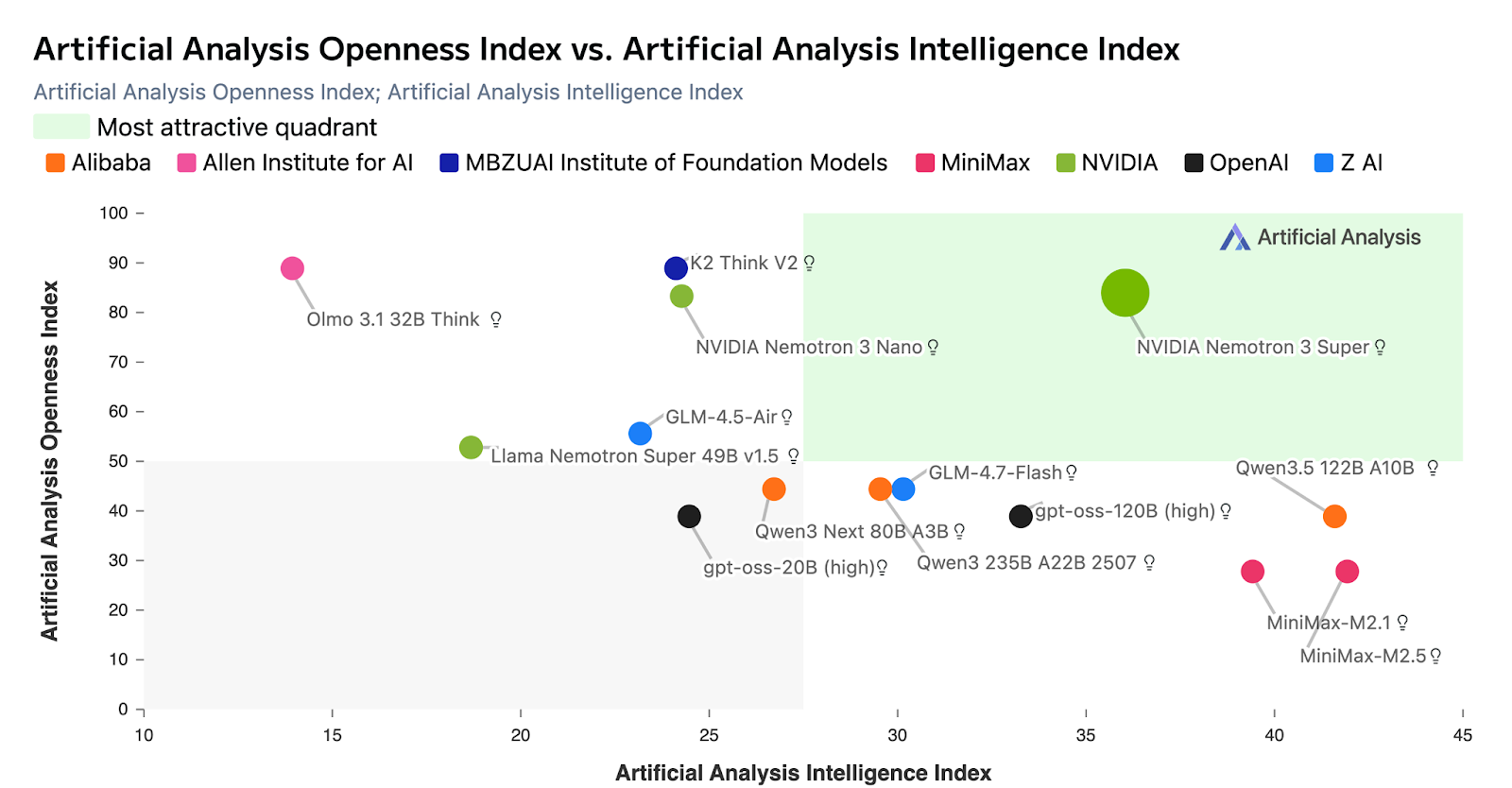

We are excited to support the newly released NVIDIA Nemotron 3 Super model on vLLM.

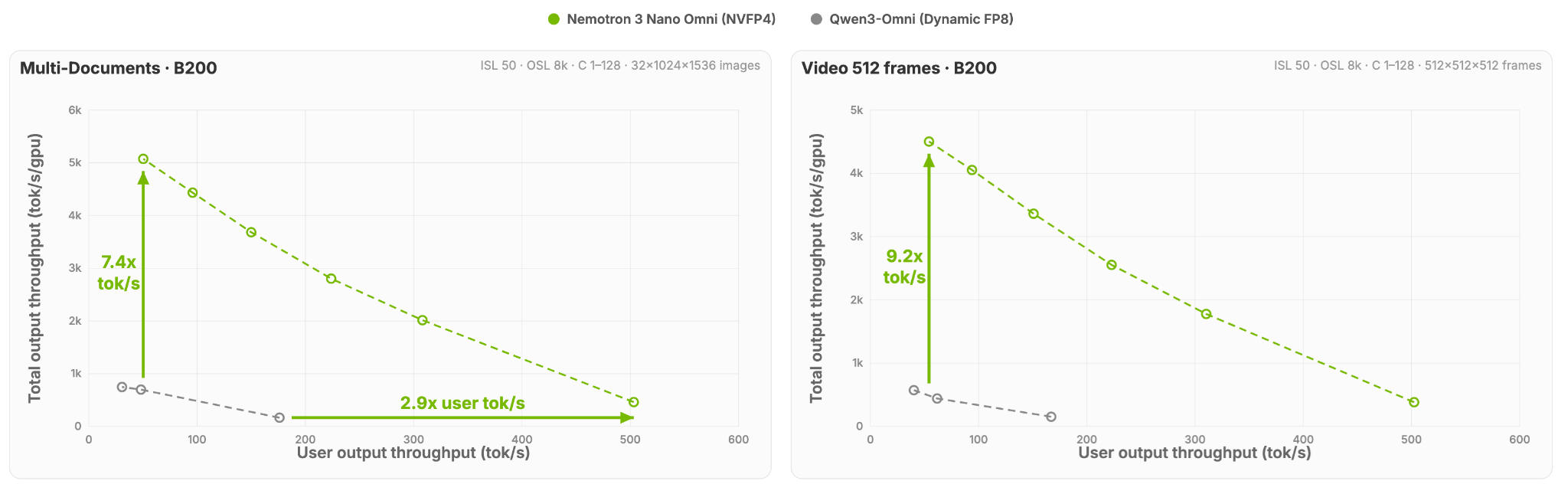

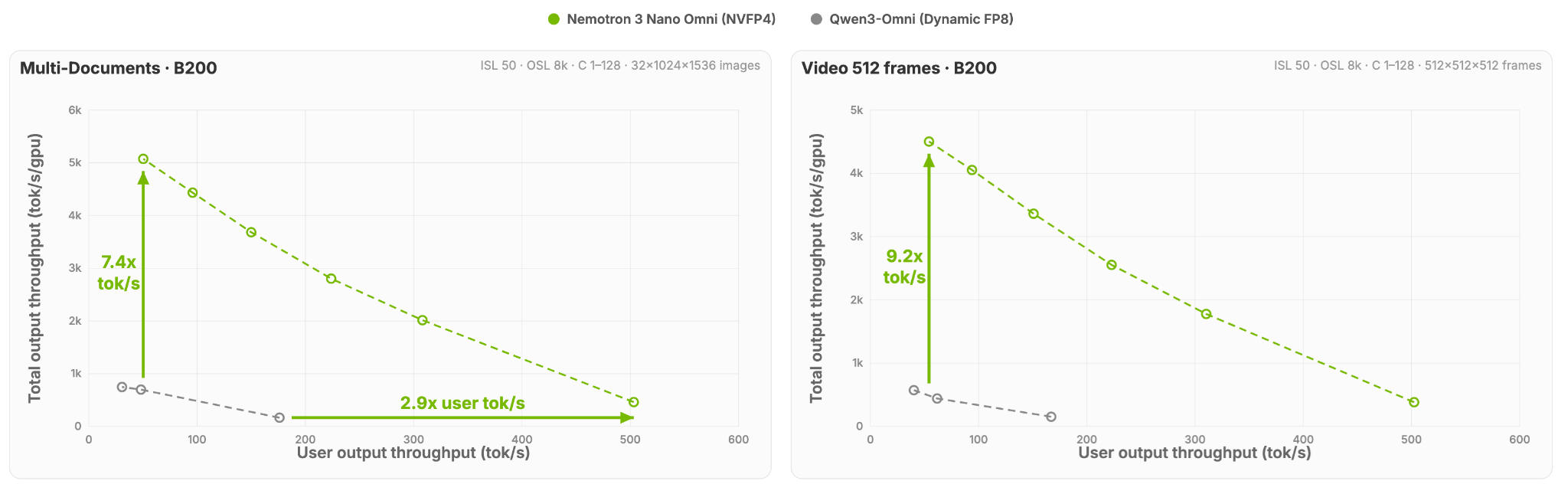

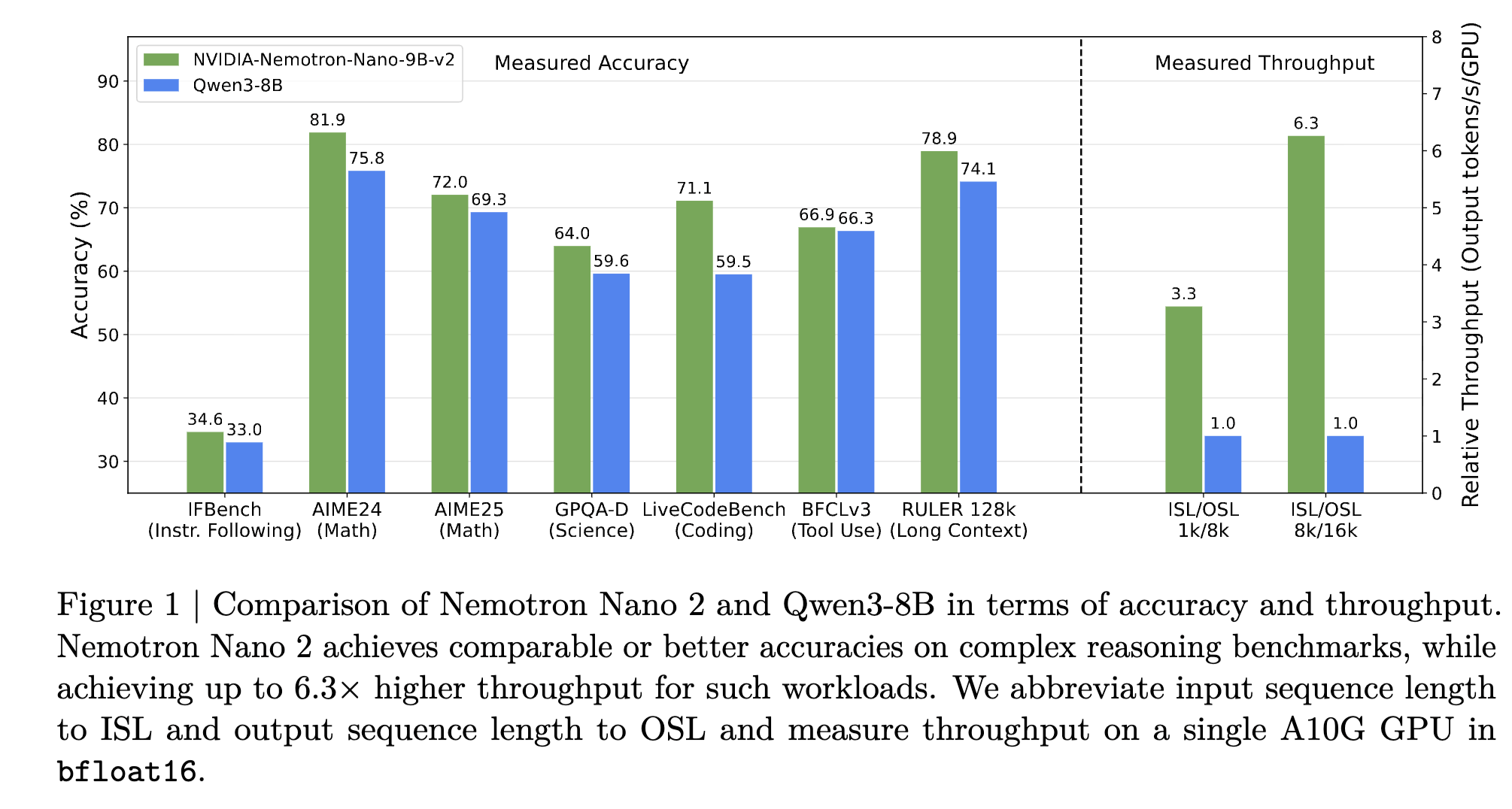

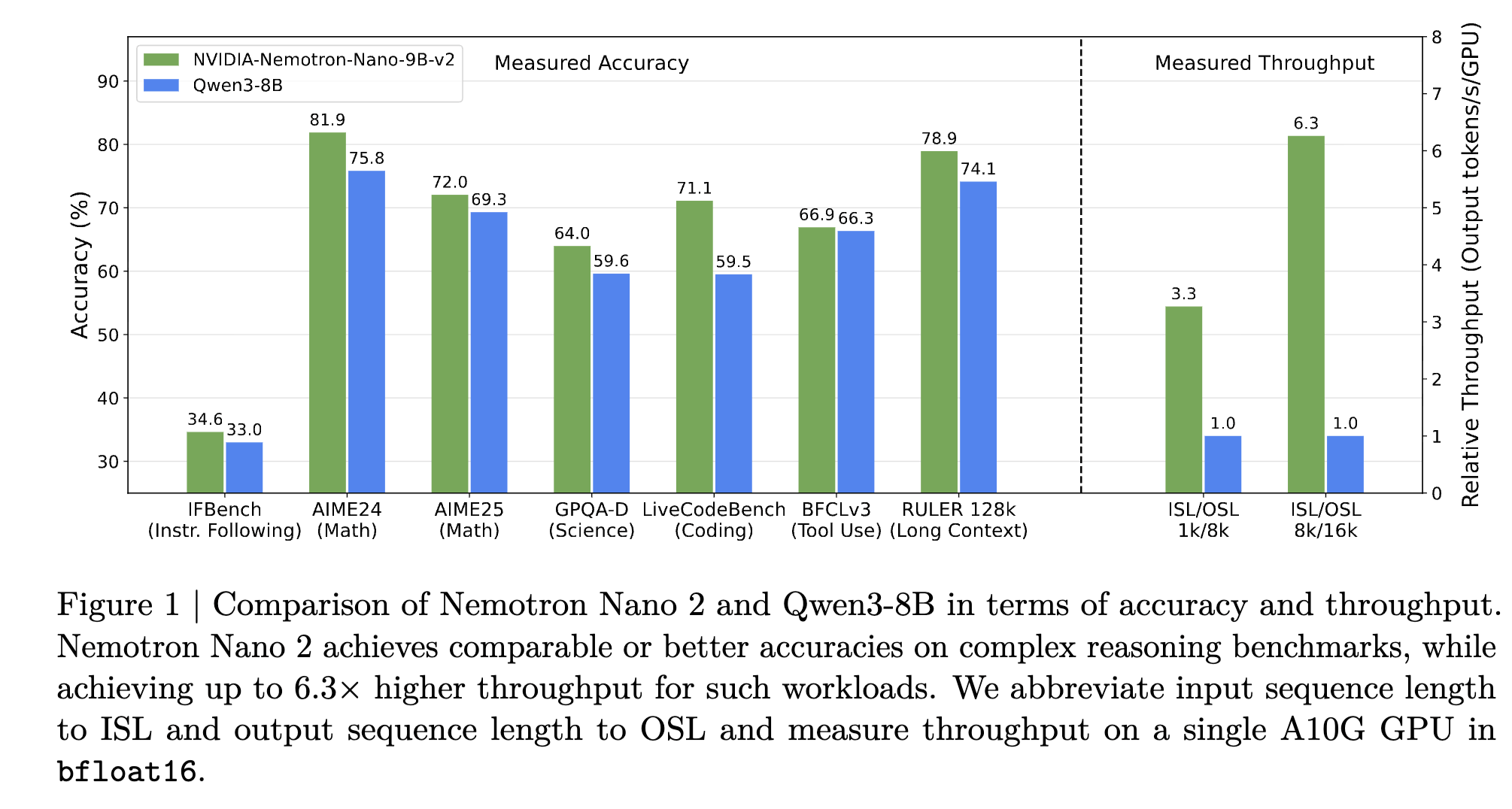

Jan 28th Update: NVIDIA just released their Nemotron 3 Nano model in NVFP4 precision. This model is supported by vLLM out of the box and it uses a new method called Quantization-Aware Distillation...

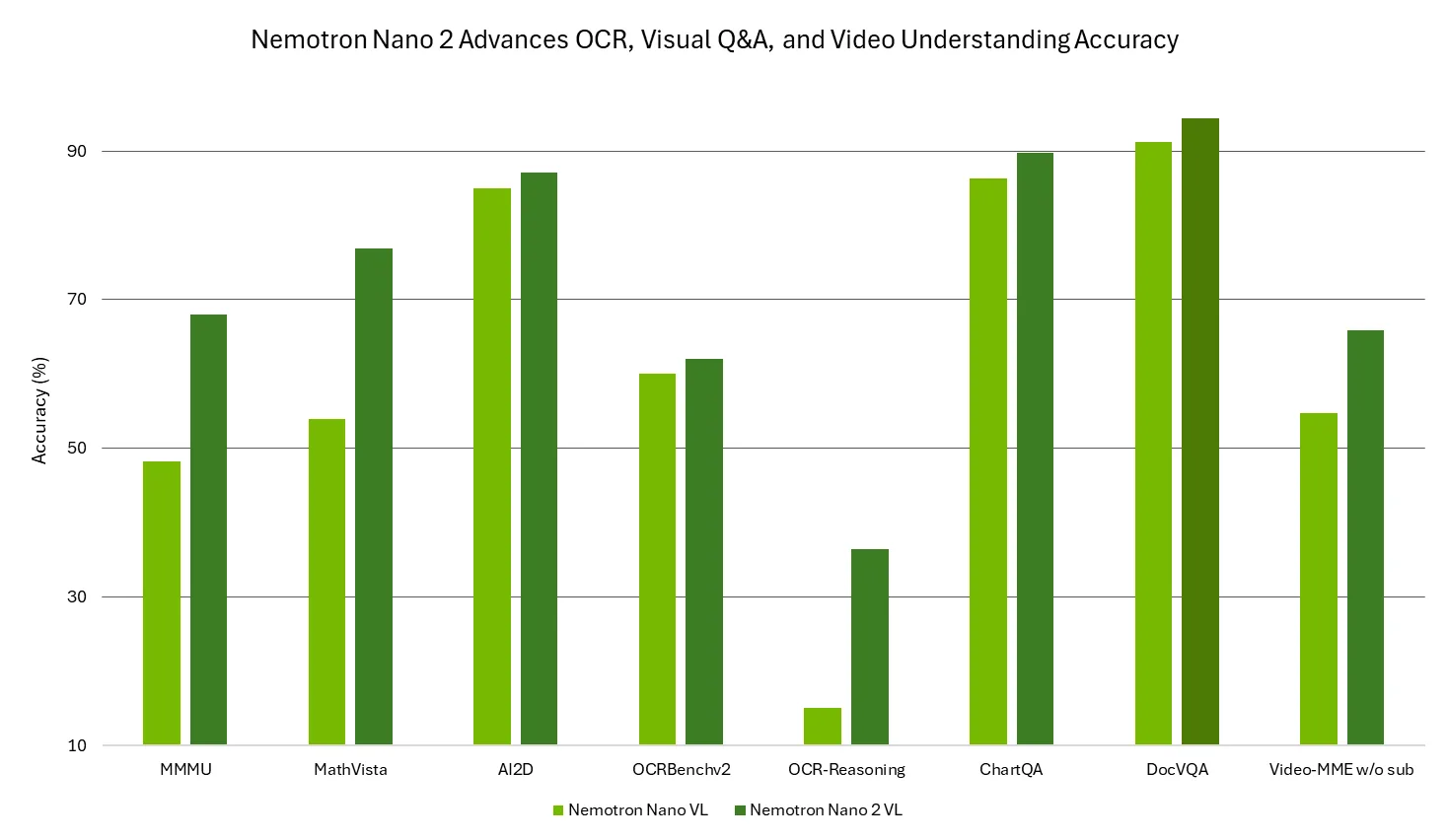

We are excited to release NVIDIA Nemotron Nano 2 VL, supported by vLLM. This open vision language model (VLM) is built for video understanding and document intelligence.

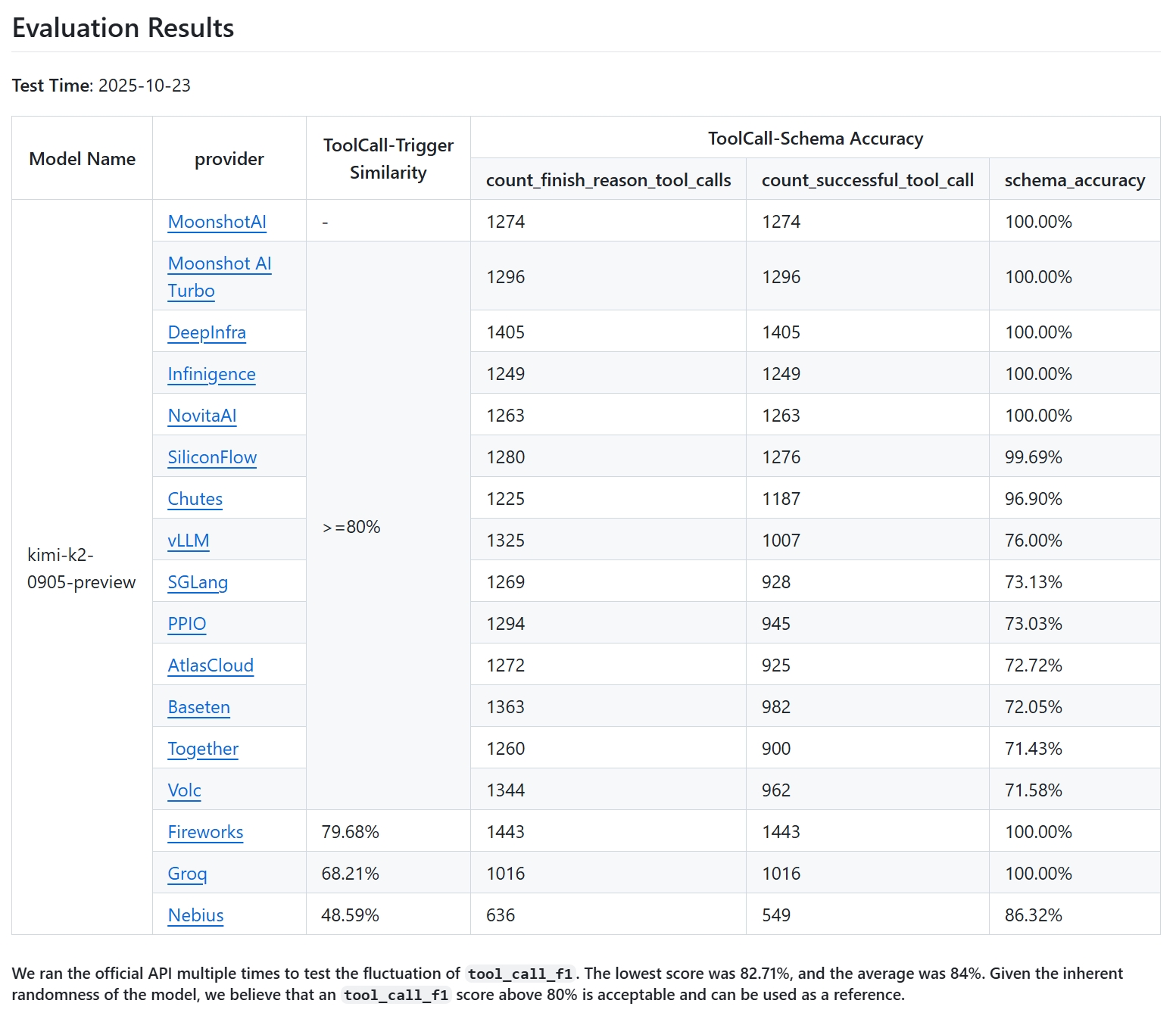

TL;DR: For best compatibility with vLLM, use Kimi K2 models whose chat templates were updated after commit 94a4053eb8863059dd8afc00937f054e1365abbd (Kimi-K2-0905) or commit...

Agentic AI systems, capable of reasoning, planning, and taking autonomous actions, are powering the next leap in developer applications. To build these systems, developers need tools that are...

We are excited to announce Day 0 support for DeepSeek-V3.2-Exp, featuring DeepSeek Sparse Attention (DSA) (paper) designed for long context tasks. In this post, we showcase how to use this model...

We’re excited to announce that vLLM now supports Qwen3-Next, the latest generation of foundation models from the Qwen team. Qwen3-Next introduces a hybrid architecture with extreme efficiency for...

General Language Model (GLM) is a family of foundation models created by Zhipu.ai (now renamed to Z.ai). The GLM team has a long-term collaboration with the vLLM team, dating back to the early days of...

We're thrilled to announce that vLLM now supports gpt-oss on NVIDIA Blackwell and Hopper GPUs, as well as AMD MI300X and MI355X GPUs. In this blog post, we’ll explore the efficient model...

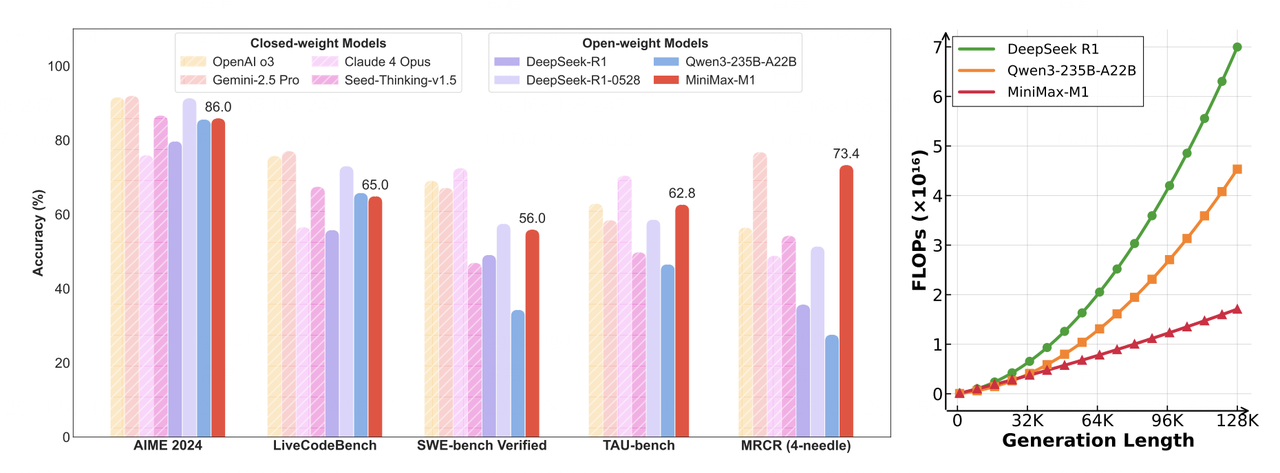

This article explores how MiniMax-M1's hybrid architecture is efficiently supported in vLLM. We discuss the model's unique features, the challenges of efficient inference, and the technical...

The Hugging Face Transformers library offers a flexible, unified interface to a vast ecosystem of model architectures. From research to fine-tuning on custom datasets, Transformers is the go-to...

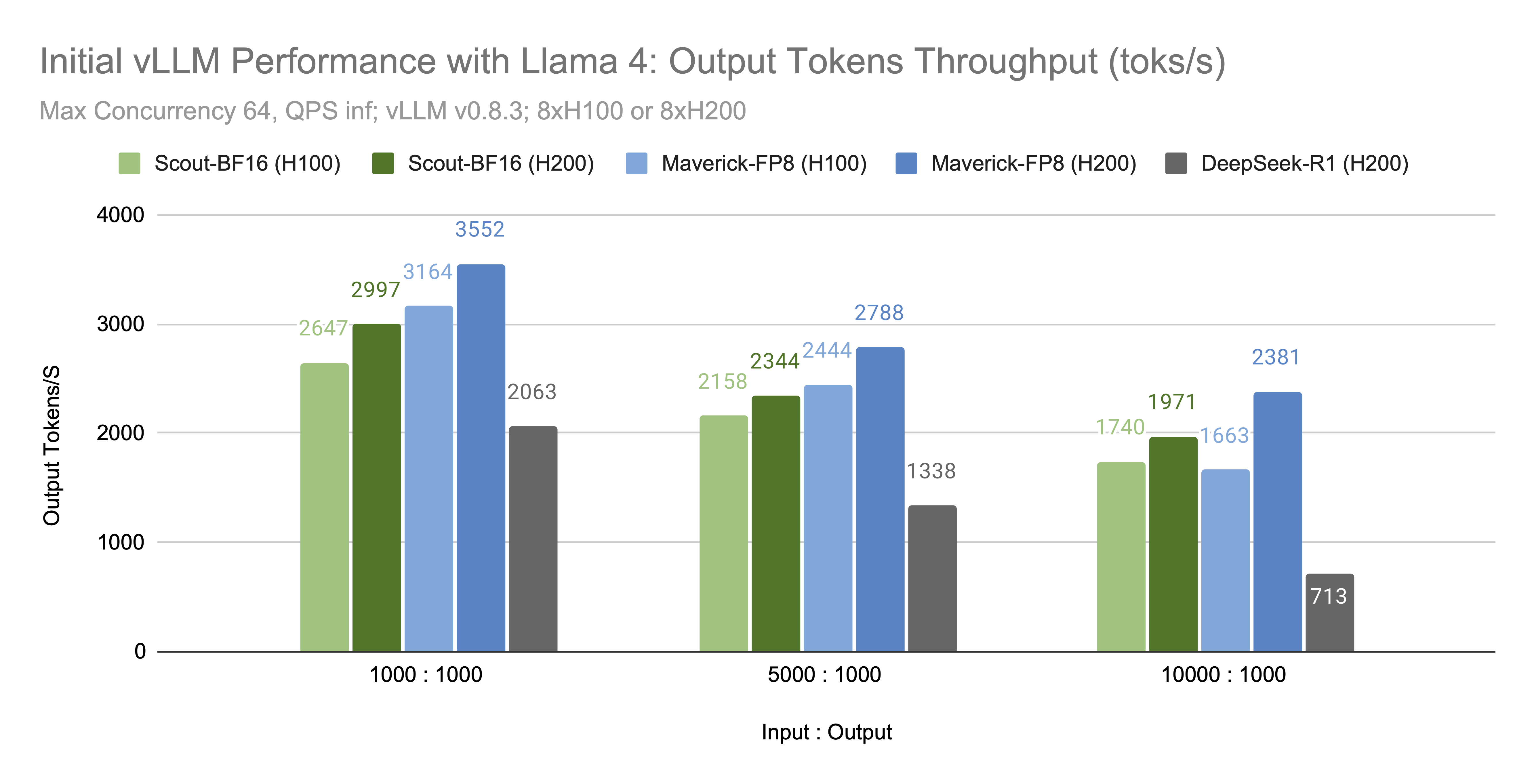

We're excited to announce that vLLM now supports the Llama 4 herd of models: Scout (17B-16E) and Maverick (17B-128E). You can run these powerful long-context, natively multi-modal (up to 8-10...

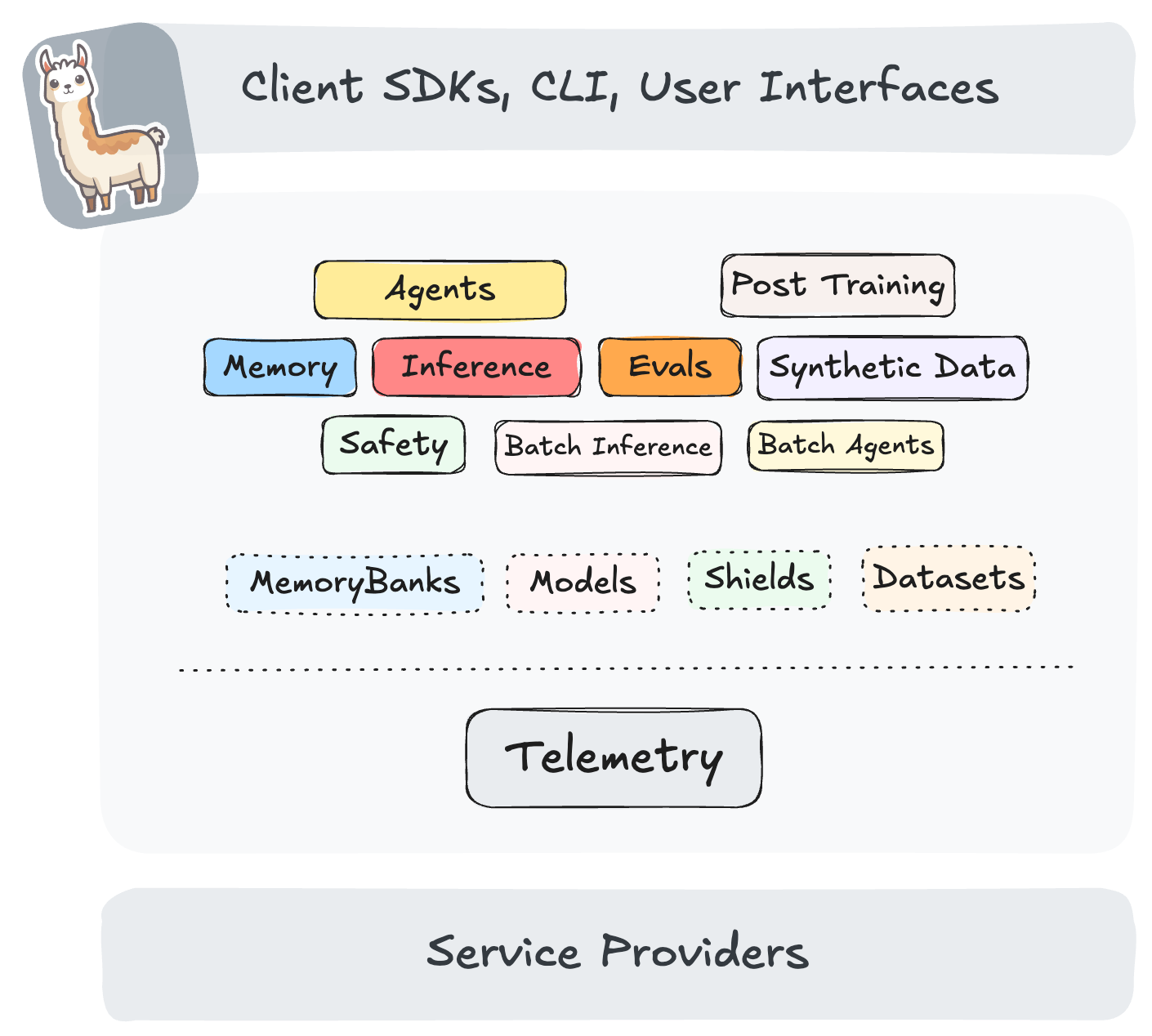

We are excited to announce that the vLLM inference provider is now available in Llama Stack through a collaboration between the Red Hat AI Engineering team and the Llama Stack team from Meta. This...

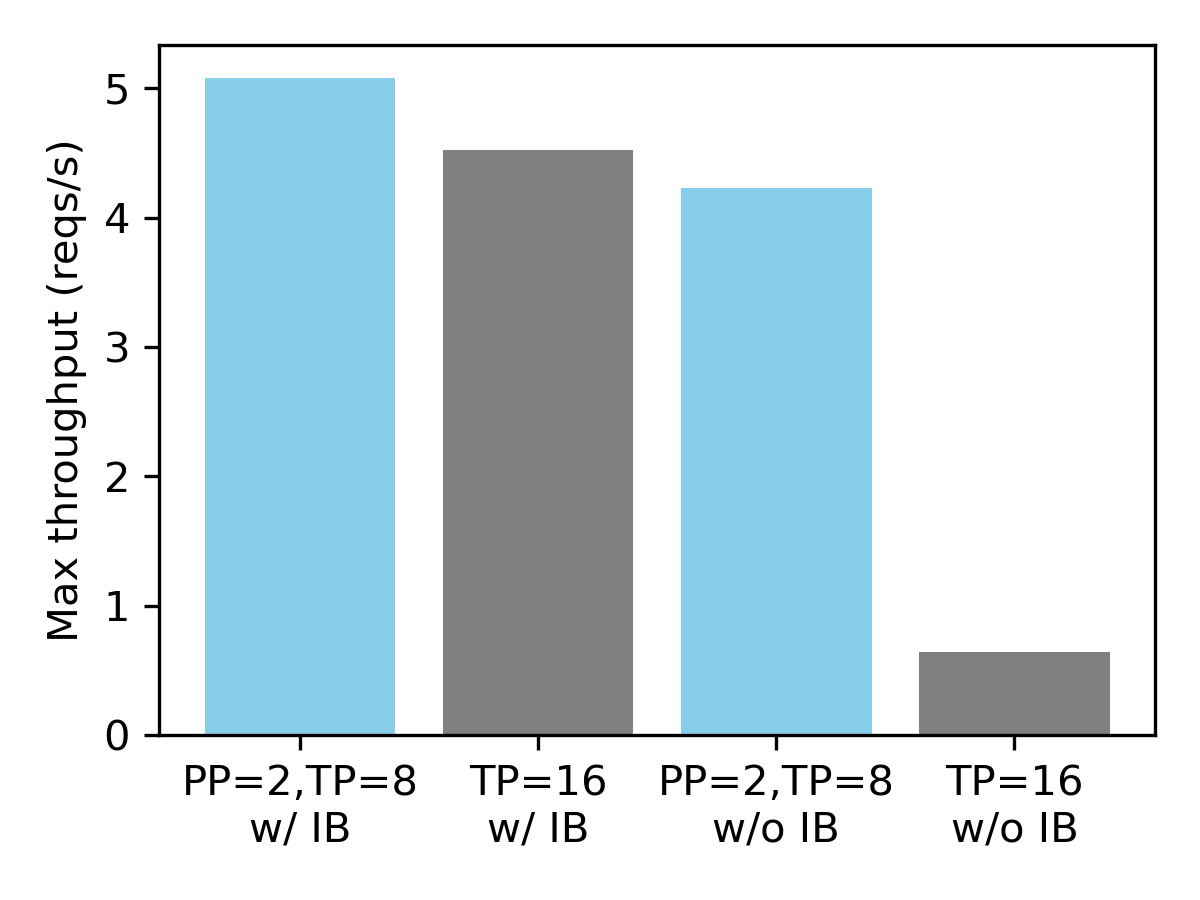

Today, the vLLM team is excited to partner with Meta to announce support for the Llama 3.1 model series. Llama 3.1 comes with exciting new features, including a longer context length (up to 128K...