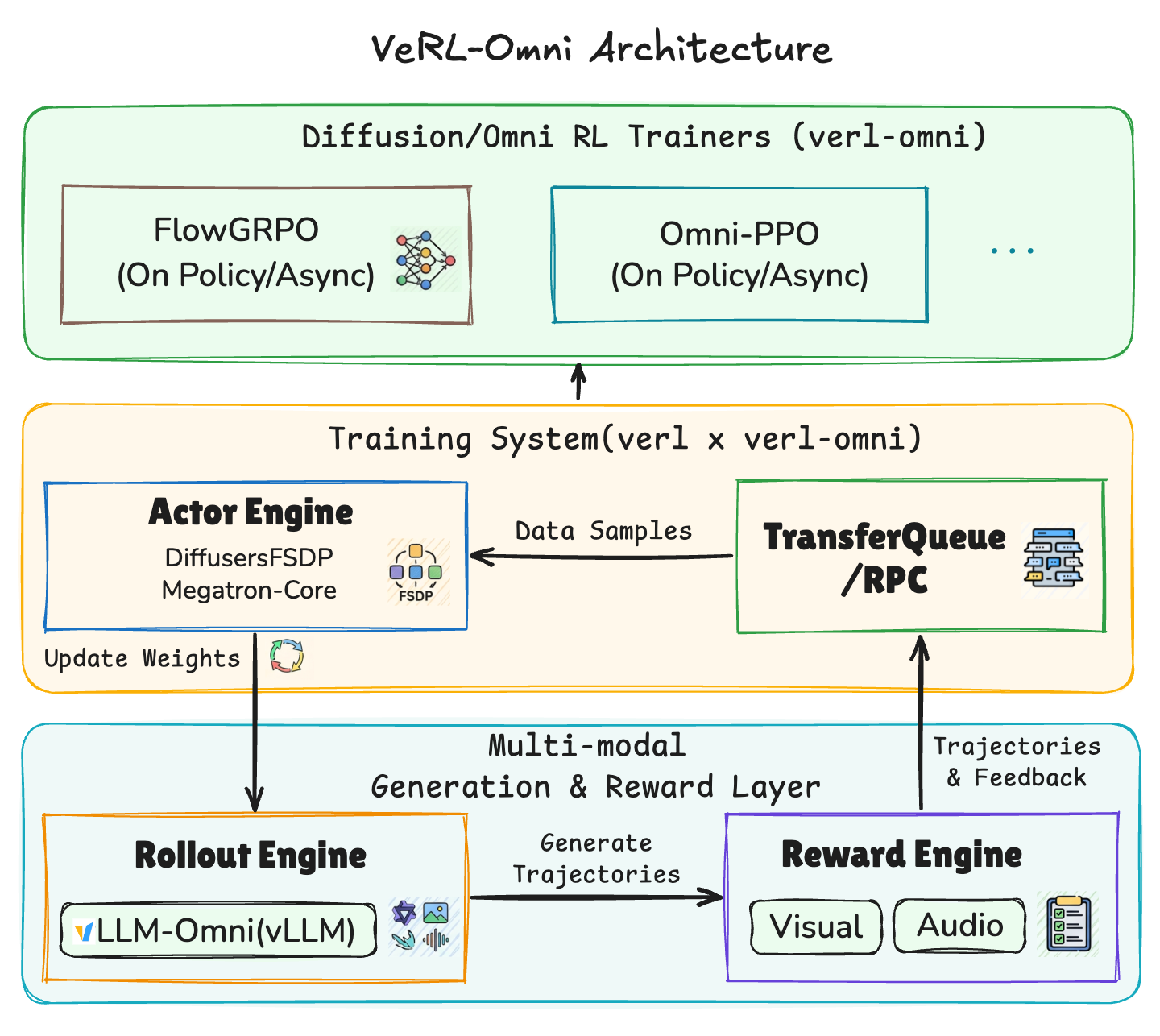

Announcing VeRL-Omni: Easy, Fast, and Stable RL Training for Diffusion and Omni-Modality Models

We are excited to announce the pre-release of VeRL-Omni, a general-purpose reinforcement learning (RL) post-training framework for multimodal generative models, built on top of verl and vllm-omni.